Tuesday, 29 November 2016

Green Millhouse - Temp Monitoring 1 - Hacking the BroadLink A1 Sensor

These devices, like those before them, have dubious reputations for "phoning home" to random Chinese clouds and being difficult and unreliable to set up. I can confirm!

The first problem is easily nipped in the bud with some judicious network configuration, as I outlined last time. The device works just as well when isolated from the outside world, so there is nothing to fear there.

The second problem is real. Luckily, it's as if they know the default device-finding process will fail (which it did for me the half-dozen times I tried), and they actually support and document an alternative scheme ("AP Mode") which works just fine. Just one thing though - this device seems to have NO persisted storage of its network settings (!) which probably means you'll be going through the setup process a few times - you lose power, you lose the device. Oy.

So once I had the sensor working with its (actually quite decent) Android app, it was time to start protocol-sniffing, as there is no existing binding for this device in OpenHAB. It quickly became apparent that this would be a tough job. The app appeared to use multicast IP to address its devices, and a binary protocol over UDP for data exchange.

Luckily, after a bit more probing with WireShark and PacketSender, the multicast element proved to be a non-event - seems like the app broadcasts (i.e. to 255.255.255.255) and multicasts the same discovery packet in either case. My tests showed no response to the multicast request on my network, so I ignored it.

Someone did some hacks around the Android C library (linked from an online discussion about BroadLink devices) but all my packet captures showed that encryption is being employed (for reasons unknown) and inspection confirms encryption is performed in a closed-source C library that I have no desire to drill into any further.

A shame. The BroadLink A1 sensor is a dead-end for me, because of their closed philosophy. I would have happily purchased a number of these devices if they used an open protocol, and would have published libraries and/or bindings for OpenHAB etc, which in turn encourages others to purchase this sort of device.

UPDATE - FEB 2018: The Broadlink proprietary encrypted communication protocol has been cracked! OpenHAB + Broadlink = viable!

Friday, 28 October 2016

Configuring Jacoco4sbt for a Play application

One such plugin is Jacoco4sbt, which wires the JaCoCo code-coverage tool into SBT (the build system for Play apps). Configuration is pretty straightforward, and the generated HTML report is a nice way to target untested corners of your code. The only downside (which I've finally got around to looking at fixing) was that by default, a lot of framework-generated code is included in your coverage stats.

So without further ado, here's a stanza you can add to your Play app's build.sbt to whittle down your coverage report to code you actually wrote:

jacoco.settings

jacoco.excludes in jacoco.Config := Seq(

"views*",

"*Routes*",

"controllers*routes*",

"controllers*Reverse*",

"controllers*javascript*",

"controller*ref*",

"assets*"

)

I'll be putting this into all my Play projects from now on. Hope it helps someone.

Thursday, 29 September 2016

Push notifications and the endless quest for "rightness"

My attempt to get this working basically extended the existing system which used a count and some Booleans. After countless hours of trying to get this sync right, I came to a realization:

Trying to keep state by passing Booleans back and forth is like flinging paint at a wall and expecting the Mona Lisa to appear.

I had seenLatest, I had hasChanges and even resorted to forceUpdate. There was always a corner-case or timing/sequencing condition that would trip it up. And then I realized that I needed to embrace timing. Each syncable thing has a lastUpdated time and each client has a lastSaw time.

Key for getting this working was remembering to think about this from a user's point of view - they may well be logged in on three devices (aka clients) but once they have seen a notification on any device they don't want to see it again.

The pseudocode for this came down to:

Polling Loop / When a thing changes

userLastSawTimestamp = max(clientLastSawTimestamps)

if (thing.lastUpdated > userLastSawTimestamp) {

showNotification(thing)

}

On User Viewing Thing (i.e. clearing the badge)

clientLastSawTimestamp[clientId] = now
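The pseudocode above could be sketched in Scala roughly as follows. This is only an illustration: the names `Thing`, `SeenState`, `markSeen` and `shouldNotify` are made up for this sketch, and a real system would persist the per-client timestamps rather than hold them in an in-memory map.

```scala
import java.time.Instant

object NotificationSketch {
  // A syncable thing only needs to know when it last changed
  final case class Thing(id: String, lastUpdated: Instant)

  // Immutable record of when each of a user's clients last viewed things
  final case class SeenState(clientLastSaw: Map[String, Instant] = Map.empty) {

    // The user has "seen" everything up to the most recent view on ANY client
    def userLastSaw: Instant =
      clientLastSaw.values.foldLeft(Instant.EPOCH) { (latest, t) =>
        if (t.isAfter(latest)) t else latest
      }

    // Notify only if the thing changed after the user's latest view
    def shouldNotify(thing: Thing): Boolean =
      thing.lastUpdated.isAfter(userLastSaw)

    // Called when the user views things on one client (i.e. clears the badge)
    def markSeen(clientId: String, now: Instant): SeenState =
      copy(clientLastSaw = clientLastSaw.updated(clientId, now))
  }
}
```

Because `userLastSaw` takes the maximum across all clients, viewing a notification on the phone also silences it on the tablet, which is the user-centric behaviour described above.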

And now it works how it should.

And I see failed attempts to do it correctly everywhere I look ... :-)

Thursday, 18 August 2016

Deep-diving into the Google pb= embedded map format

Let's take a look at a Google "Embed map" URL for a random lat-long. You can obtain one of these by clicking a random point on a Google Map, then clicking the lat-long hyperlink on the popup that appears at the bottom of the page. From there the map sidebar swings out; choose Share -> Embed map - that's your URL.

"https://www.google.com/maps/embed?pb= !1m18!1m12!1m3!1d3152.8774048836685 !2d145.01352231578036 !3d-37.792912740624445!2m3!1f0!2f0!3f0!3m2!1i1024!2i768 !4f13.1!3m3!1m2!1s0x0%3A0x0!2zMzfCsDQ3JzM0LjUiUyAxNDXCsDAwJzU2LjYiRQ !5e0!3m2!1sen!2sau!4v1471218824160"Well, it's not pretty, but with the help of Andrew Whitby's cheat sheet and the comments from others, it turns out we can actually render it as a nested structure knowing that the format [id]m[n] means a structure (multi-field perhaps?) with n children in total - my IDE helped a lot here with indentation:

"https://www.google.com/maps/embed?pb=" +

"!1m18" +

"!1m12" +

"!1m3" +

"!1d3152.8774048836685" +

"!2d145.01352231578036" +

"!3d-37.792912740624445" +

"!2m3" +

"!1f0" +

"!2f0" +

"!3f0" +

"!3m2" +

"!1i1024" +

"!2i768" +

"!4f13.1" +

"!3m3" +

"!1m2" +

"!1s0x0%3A0x0" +

"!2zMzfCsDQ3JzM0LjUiUyAxNDXCsDAwJzU2LjYiRQ" +

"!5e0" +

"!3m2" +

"!1sen" +

"!2sau" +

"!4v1471218824160"

It all (kinda) makes sense! You can see how a decoder could quite easily be able to count ! characters to decide that a bang-group (or could we call it an m-group?) has finished. I'm going to take a stab and say the e represents an enumerated type too - given that !5e0 is "roadmap" (default) mode and !5e1 forces "satellite" mode.
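To make the counting rule concrete, here is a minimal Scala sketch of a decoder. It assumes only what was observed above: tokens are separated by `!`, each token is an id followed by a single type letter and a value, and an `m` token's number is the count of tokens (at any depth) that belong to it. The names `PbParser`, `PbField` and `PbGroup` are invented for this sketch, and the format is undocumented, so treat it as a guess that happens to fit this URL.

```scala
object PbParser {
  sealed trait PbNode
  // A leaf such as "2d145.0135": id 2, type letter 'd', value "145.0135"
  final case class PbField(id: Int, kind: Char, value: String) extends PbNode
  // An "m" token plus the nodes built from the tokens it claims
  final case class PbGroup(id: Int, children: List[PbNode]) extends PbNode

  // The pb value from the example URL in this post
  val ExamplePb: String =
    "!1m18!1m12!1m3!1d3152.8774048836685!2d145.01352231578036" +
      "!3d-37.792912740624445!2m3!1f0!2f0!3f0!3m2!1i1024!2i768!4f13.1" +
      "!3m3!1m2!1s0x0%3A0x0!2zMzfCsDQ3JzM0LjUiUyAxNDXCsDAwJzU2LjYiRQ" +
      "!5e0!3m2!1sen!2sau!4v1471218824160"

  // One token looks like <id><type-letter><value>
  private val Token = """(\d+)([a-z])(.*)""".r

  def parse(pb: String): List[PbNode] =
    parseTokens(pb.split('!').filter(_.nonEmpty).toList)

  private def parseTokens(tokens: List[String]): List[PbNode] = tokens match {
    case Nil => Nil
    case Token(id, "m", count) :: rest =>
      // The next `count` tokens belong to this group, however deeply nested
      val (inside, after) = rest.splitAt(count.toInt)
      PbGroup(id.toInt, parseTokens(inside)) :: parseTokens(after)
    case Token(id, kind, value) :: rest =>
      PbField(id.toInt, kind.head, value) :: parseTokens(rest)
    case other :: _ =>
      throw new IllegalArgumentException(s"Unrecognised pb token: $other")
  }
}
```

Calling `PbParser.parse(PbParser.ExamplePb)` yields three top-level nodes: the big `1m18` group, the `3m2` language/region group, and the trailing `4v` field.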

So this is all very well but it doesn't explain why URLs that I generate using the standard method don't actually put the lat-long I selected into the URL - yet they render perfectly! What do I mean? Well, the lat-long that I clicked on (i.e. the marker) for this example is actually:

-37.792916, 145.015722

And yet in the URL it appears (kinda) as:

-37.792912, 145.013522

Which is enough to be slightly, visibly, annoyingly, wrong if you're trying to use it as-is by parsing the URL. What I thought I needed to understand now was this section of the URL:

"!1d3152.8774048836685" +

"!2d145.01352231578036" +

"!3d-37.792912740624445" +

Being the "scale" and centre points of the map. Then I realised - it's quite subtle, but for (one presumes) aesthetic appeal, Google doesn't put the map marker in the dead-centre of the map. So these co-ordinates are just the map centre. The marker itself is defined elsewhere. And there's only one place left. The mysterious z field:

!2zMzfCsDQ3JzM0LjUiUyAxNDXCsDAwJzU2LjYiRQ

Sure enough, substituting the z-field from Mr. Whitby's example completely relocates the map to put the marker in the middle of Iowa. So now; how to decode this? Well, on a hunch I tried base64decode-ing it, and bingo:

% echo MzfCsDQ3JzM0LjUiUyAxNDXCsDAwJzU2LjYiRQ | base64 --decode

37°47'34.5"S 145°00'56.6"E

So there we have it. I can finally parse out the lat-long of the marker when given an embed URL. Hope it helps someone else out there...
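For anyone wanting to do the same thing in code, here is a small Scala sketch of the decoding step. It assumes the z-field payload is always Base64 text holding two degrees/minutes/seconds coordinates in the shape seen above; that is a guess based on this single example, and `ZFieldDecoder` and its method names are invented for this sketch.

```scala
import java.nio.charset.StandardCharsets
import java.util.Base64

object ZFieldDecoder {
  // Matches one coordinate such as 37°47'34.5"S (\u00B0 is the degree sign)
  private val Dms = "(\\d+)\u00B0(\\d+)'([\\d.]+)\"([NSEW])".r

  /** Base64-decodes the z-field payload (without the leading "z") into text. */
  def decodeText(zPayload: String): String =
    new String(Base64.getDecoder.decode(zPayload), StandardCharsets.UTF_8)

  /** Converts degrees/minutes/seconds plus hemisphere into signed decimal degrees. */
  private def toDecimal(deg: String, min: String, sec: String, hemi: String): Double = {
    val magnitude = deg.toDouble + min.toDouble / 60 + sec.toDouble / 3600
    if (hemi == "S" || hemi == "W") -magnitude else magnitude
  }

  /** Returns (latitude, longitude) if the payload holds exactly two DMS coordinates. */
  def markerLatLong(zPayload: String): Option[(Double, Double)] =
    Dms.findAllMatchIn(decodeText(zPayload)).toList match {
      case List(lat, lon) =>
        Some((
          toDecimal(lat.group(1), lat.group(2), lat.group(3), lat.group(4)),
          toDecimal(lon.group(1), lon.group(2), lon.group(3), lon.group(4))
        ))
      case _ => None
    }
}
```

Feeding it the payload from this post gives a latitude and longitude that agree with the marker position I originally clicked (-37.792916, 145.015722) to within rounding.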

Saturday, 30 July 2016

Vultr Jenkins Slave GO!

I selected a "20Gb SSD / 1024Mb" instance located in "Silicon Valley" for my slave. Being on the opposite side of the US to my OpenShift boxes feels like a small, but important factor in preventing total catastrophe in the event of a datacenter outage.

Setup Steps

(All these steps should be performed as root.)

User and access

Create a jenkins user:

addgroup jenkins
adduser jenkins --ingroup jenkins

Now grab the id_rsa.pub from your Jenkins master's .ssh directory and put it into /home/jenkins/.ssh/authorized_keys. In the Jenkins UI, set up a new set of credentials corresponding to this, using "use a file from the Jenkins master .ssh" (which, by the way, on OpenShift will be located at /var/lib/openshift/{userid}/app-root/data/.ssh/jenkins_id_rsa).

I like to keep things organised, so I made a vultr.com "domain" container and then created the credentials inside.

Install Java

echo "deb http://ppa.launchpad.net/webupd8team/java/ubuntu xenial main" | tee /etc/apt/sources.list.d/webupd8team-java.list
echo "deb-src http://ppa.launchpad.net/webupd8team/java/ubuntu xenial main" | tee -a /etc/apt/sources.list.d/webupd8team-java.list
apt-key adv --keyserver hkp://keyserver.ubuntu.com:80 --recv-keys EEA14886
apt-get update
apt-get install oracle-java8-installer

Install SBT

apt-get install apt-transport-https
echo "deb https://dl.bintray.com/sbt/debian /" | tee -a /etc/apt/sources.list.d/sbt.list
apt-key adv --keyserver hkp://keyserver.ubuntu.com:80 --recv 642AC823
apt-get update
apt-get install sbt

More useful bits

Git

apt-get install git

NodeJS

curl -sL https://deb.nodesource.com/setup_4.x | bash -
apt-get install nodejs

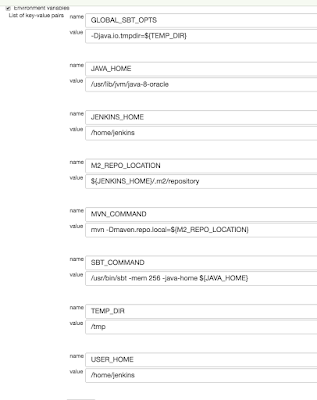

This machine is quite dramatically faster and has twice the RAM of my usual OpenShift nodes, making it extra-important to have those differences defined per-node instead of hard-coded into a job. One thing I was surprised to have to define was a memory limit for the JVM (via SBT's -mem argument) as I was getting "There is insufficient memory for the Java Runtime Environment to continue" errors when letting it choose its own upper limit. For posterity, here are the environment variables I have configured for my Vultr slave:

Wednesday, 29 June 2016

ReactJS - Early Thoughts

I have always been comfortable with HTML, and first applied a CSS rule (inline) waaay back in the Netscape 3.0 days:

<a href="..." style="text-decoration: none;">Link</a>

I'm pretty sure there were HTML frames involved there too. Good times. Anyway, the Javascript world had always been a little too wild-and-crazy for me to get deeply into; browser support sucked, the "standard library" kinda sucked, and people had holy wars about how to structure even the simplest code.

Up to about 2015, I'd been happy enough with the basic tools - JQuery for DOM-smashing and AJAX, Underscore/Lodash for collection-manipulation, and bringing in Bootstrap's JS library for a little extra polish. The whole thing based on full HTML being rendered from a traditional multi-tiered server, ideally written in Scala. I got a lot done this way.

I had a couple of brushes with Angular (1.x) along the way and didn't really see the point; the Angular code was always still layered on top of "perfectly-good" HTML from the server. It was certainly better-structured than the usual JQuery mess but the hundreds of extra kilobytes to be downloaded just didn't seem to justify themselves.

Now this year, I've been working with a Real™ Front End project - that is, one that stands alone and consumes JSON from a back-end. This is using Webpack, Babel, ES6, ReactJS and Redux as its principal technologies. After 6 weeks of working on this, here are some of my first thoughts:

- Good ES6 goes a long way to making Javascript feel grown-up

- Bad The whole Webpack-Babel-bundling thing feels really rough - so much configuration, so hard to follow

- Good React-Hot-Reloading is super, super-nice. Automatic browser reloads that keep state are truly magic

- Bad You can still completely, silently toast a React app with a misplaced comma ...

- Good ... but ESLint will probably tell you where you messed up

- Bad It's still Javascript, I miss strong typing ...

- Good ... but React PropTypes can partly help to ensure arguments are there and (roughly) the right type

- Good Redux is a really neat way of compartmentalising state and state transitions - super-important in front-ends

- Good The React Way of flowing props down through components really helps with code structure

So yep, there were more Goods than Bads - I'm liking React, and I'm finally feeling like large apps can be built with JavaScript, get that complete separation between back- and front-ends, and be maintainable and practical for multiple people to work on concurrently. Stay tuned for more!

Thursday, 12 May 2016

Cloudy Continuous Integration Part 2 - Trigger-Happy

Why do otherwise-excellent and smart engineers end up doing the kind of dumb polling in Jenkins that would keep them up at night if it was their code? Mainly because historically, it's been substantially harder to get properly-triggered job execution going in Jenkins. But things are getting better. The Jenkins GitHub Plugin does a terrific job of simplifying triggering, thanks to its convention-over-configuration approach - once you've nominated where your GitHub repo is, getting triggering is as simple as checking a box. Lovely.

Now, finally, it seems BitBucket have almost caught up in this regard. Naturally, as they offer (free) private repositories, there is a little bit more configuration required on the SCM side, but I can confirm that in May 2016, it works. There seem to have been a lot of changes going on under the hood at BitBucket, and the reliability of their triggering has suffered from week-to-week at times, but hopefully things will be solid now.

The 2016 Way to trigger Jenkins from BitBucket

- Firstly, there is now no need to configure a special user for triggering purposes

- Install the Jenkins BitBucket plugin. For your reference, I have 1.1.5

- In jobs that you want to be triggered, note there is a new "BitBucket" option under Build Triggers. You want this. If it was checked, uncheck the "polling" option and feel clean

- That's the Jenkins side done. Now flip to your BitBucket repo, and head to the Settings

- Under Integrations -> Webhooks add a new one, and fill it out something like this: Where jjj.rhcloud.com is your (in this case imaginary-OpenShift) Jenkins URL.

- Make sure you've included that trailing slash, and then you're done! Push some code to test

Hat-Tips to the following (but sadly outdated) bloggers

Wednesday, 27 April 2016

Happy and Healthy Heterogeneous Build Slaves in Jenkins

So a second slave was brought on line - my old Dell Inspiron 9300 laptop from 2006 - which (after an upgrade to 2Gb of RAM for a handful of dollars online) has done a sterling job. Running Ubuntu 14.04 Desktop edition seems to not tax the Intel Pentium M too badly, and it seemed crazy to get rid of that amazing 17" 1920x1200 screen for a pittance on eBay. Now at this point I had two slaves on line, with highly different capabilities.

Horses for Courses

The OpenShift node (slave1) has low RAM, slow CPU, very limited persistent storage but exceptionally quick network access (being located in a datacenter somewhere on the US East Coast), while the laptop (slave2) has a reasonable amount of RAM, moderate CPU, tons of disk but relatively slow transfer rates to the outside world, via ADSL2 down here in Australia. How to deal with all these differences when running jobs that could be farmed out to either node?

The solution is of course the classic layer of indirection that allows the different boxes to be addressed consistently. Here is the configuration for my slave1 Redhat box on OpenShift:

Note the -mem argument in the SBT_COMMAND which sets the -Xmx and -Xms to this number and PermGen to 2* this number, keeping a lid on resource usage. And here's slave2, the Ubuntu laptop, with no such restriction needed:

And here's what a typical build job looks like:

Caring for Special-needs Nodes

Finally, my disk-challenged slave1 node gets a couple of Jenkins jobs to tend to it. The first periodically runs a git gc in each .git directory under the Jenkins workspace (as per a Stack Overflow answer) - it runs quota before-and-after to show how much (if anything) was cleared up:

The second job periodically removes the target directory wherever it is found - SBT builds leave a lot of stuff in here that can really add up. Here's what it looks like:

Wednesday, 16 March 2016

Building reusable, type-safe Twirl components

Here's a simple example. I'm using Bootstrap (of course), and I'm using the table-striped class to add a little bit of interest to tabular data. The setup of an HTML table is quite verbose and definitely doesn't need to be repeated, so I started with the following basic structure:

@(items:Seq[_], headings:Seq[String] = Nil)

<table class="table table-striped">

@if(headings.nonEmpty) {

<thead>

<tr>

@for(heading <- headings) {

<th>@heading</th>

}

</tr>

</thead>

}

<tbody>

@for(item <- items) {

<tr>

???

</tr>

}

</tbody>

</table>

Which neatens up the call-site from 20-odd lines to one:

@stripedtable(userList, Seq("Name", "Age"))

Except. How do I render each row in the table body? That differs for every use case!

What I really wanted was to be able to map over each of the items, applying some client-provided function to render a load of <td>...</td> cells for each one. Basically, I wanted stripedtable to have this signature:

@(items:Seq[T], headings:Seq[String] = Nil)(fn: T => Html)

With the body simply being:

@for(item <- items) {

<tr>

@fn(item)

</tr>

}

and client code looking like this:

@stripedtable(userList, Seq("Name", "Age")) { user:User =>

<td>@user.name</td><td>@user.age</td>

}

...aaaaand we have a big problem. At least at time of writing, Twirl templates cannot be given type arguments. So those [T]'s just won't work. Loosening off the types like this:

@(items:Seq[_], headings:Seq[String] = Nil)(fn: Any => Html)

will compile, but the call-site won't work because the compiler has no idea that the _ and the Any are referring to the same type. Workaround solutions? There are two, depending on how explosively you want type mismatches to fail:

Option 1: Supply a case as the row renderer

@stripedtable(userList, Seq("Name", "Age")) { case user:User =>

<td>@user.name</td><td>@user.age</td>

}

This works fine, as long as every item in userList is in fact a User - if not, you get a big fat MatchError.

Option 2: Supply a case as the row renderer, and accept a PartialFunction

The template signature becomes:

@(items:Seq[_], headings:Seq[String] = Nil)(fn: PartialFunction[Any, Html])

and we tweak the body slightly:

@for(item <- items) {

@if(fn.isDefinedAt(item)) {

<tr>

@fn(item)

</tr>

}

}

In this scenario, we've protected ourselves against type mismatches, and simply skip anything that's not what we expect. Either way, I can't currently conceive of a more succinct, reusable, and obvious way to drop a consistently-built, styled table into a page than this:

@stripedtable(userList, Seq("Name", "Age")) { case user:User =>

<td>@user.name</td><td>@user.age</td>

}
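The difference between the two options can be seen outside Twirl as well. Here is a plain-Scala sketch that uses String in place of Html so it runs without Play; `StripedTableSketch`, `renderStrict` and `renderLenient` are names invented for this illustration and are not part of Twirl.

```scala
object StripedTableSketch {
  final case class User(name: String, age: Int)

  // Option 1: a plain function. A `case` literal passed here throws a
  // MatchError as soon as it meets an item it does not cover.
  def renderStrict(items: Seq[Any])(fn: Any => String): String =
    items.map(item => s"<tr>${fn(item)}</tr>").mkString

  // Option 2: a PartialFunction. Items the renderer is not defined for
  // are silently skipped (collect only applies fn where isDefinedAt holds).
  def renderLenient(items: Seq[Any])(fn: PartialFunction[Any, String]): String =
    items.collect(fn).map(cells => s"<tr>$cells</tr>").mkString
}
```

With a list containing two users and one stray String, `renderLenient` produces two rows, while `renderStrict` given the same `case user: User => ...` renderer blows up on the stray item.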

Friday, 4 March 2016

Unbreaking the Heroku Jenkins Plugin

CI Indistinguishable From Magic

I'm extremely happy with my OpenShift-based Jenkins CI setup that deploys to Heroku. It really does do the business, and the price simply cannot be beaten.

Know Thy Release

Too many times, at too many workplaces, I have faced the problem of trying to determine Is this the latest code? from "the front end". Determined not to have this problem in my own apps, I've been employing a couple of tricks for a few years now that give excellent traceability.

Firstly, I use the nifty sbt-buildinfo plugin that allows build-time values to be injected into source code. A perfect match for Jenkins builds, it creates a Scala object that can then be accessed as if it contained hard-coded values. Here's what I put in my build.sbt:

buildInfoSettings

sourceGenerators in Compile <+= buildInfo

buildInfoKeys := Seq[BuildInfoKey](name, version, scalaVersion, sbtVersion)

// Injected via Jenkins - these props are set at build time:

buildInfoKeys ++= Seq[BuildInfoKey](

"extraInfo" -> scala.util.Properties.envOrElse("EXTRA_INFO", "N/A"),

"builtBy" -> scala.util.Properties.envOrElse("NODE_NAME", "N/A"),

"builtAt" -> new java.util.Date().toString)

buildInfoPackage := "com.themillhousegroup.myproject.utils"

The Jenkins Wiki has a really useful list of available properties which you can plunder to your heart's content. It's definitely well worth creating a health or build-info page that exposes these.

Adding Value with the Heroku Jenkins Plugin

Although Heroku works spectacularly well with a simple git push, the Heroku Jenkins Plugin adds a couple of extra tricks that are very worthwhile, such as being able to place your app into/out-of "maintenance mode" - but the most pertinent here is the Heroku: Set Configuration build step. Adding this step to your build allows you to set any number of environment variables in the Heroku App that you are about to push to. You can imagine how useful this is when combined with the sbt-buildinfo plugin described above!

Here's what it looks like for one of my projects, where the built Play project is pushed to a test environment on Heroku:

Notice how I set HEROKU_ENV, which I then use in my app to determine whether key features (for example, Google Analytics) are enabled or not.

Here are a couple of helper classes that I've used repeatedly (ooh! time for a new library!) in my Heroku projects for this purpose:

import scala.util.Properties

object EnvNames {

val DEV = "dev"

val TEST = "test"

val PROD = "prod"

val STAGE = "stage"

}

object HerokuApp {

lazy val herokuEnv = Properties.envOrElse("HEROKU_ENV", EnvNames.DEV)

lazy val isProd = (EnvNames.PROD == herokuEnv)

lazy val isStage = (EnvNames.STAGE == herokuEnv)

lazy val isDev = (EnvNames.DEV == herokuEnv)

def ifProd[T](prod:T):Option[T] = if (isProd) Some(prod) else None

def ifProdElse[T](prod:T, nonProd:T):T = {

if (isProd) prod else nonProd

}

}

... And then it all went pear-shaped

I had quite a number of Play 2.x apps using this Jenkins+Heroku+BuildInfo arrangement to great success. But then at some point (around September 2015 as far as I can tell) the Heroku Jenkins Plugin started throwing an exception while trying to Set Configuration. For the benefit of any desperate Google-trawlers, it looks like this:

    at com.heroku.api.parser.Json.parse(Json.java:73)
    at com.heroku.api.request.releases.ListReleases.getResponse(ListReleases.java:63)
    at com.heroku.api.request.releases.ListReleases.getResponse(ListReleases.java:22)
    at com.heroku.api.connection.JerseyClientAsyncConnection$1.handleResponse(JerseyClientAsyncConnection.java:79)
    at com.heroku.api.connection.JerseyClientAsyncConnection$1.get(JerseyClientAsyncConnection.java:71)
    at com.heroku.api.connection.JerseyClientAsyncConnection.execute(JerseyClientAsyncConnection.java:87)
    at com.heroku.api.HerokuAPI.listReleases(HerokuAPI.java:296)
    at com.heroku.ConfigAdd.perform(ConfigAdd.java:55)
    at com.heroku.AbstractHerokuBuildStep.perform(AbstractHerokuBuildStep.java:114)
    at com.heroku.ConfigAdd.perform(ConfigAdd.java:22)
    at hudson.tasks.BuildStepMonitor$1.perform(BuildStepMonitor.java:20)
    at hudson.model.AbstractBuild$AbstractBuildExecution.perform(AbstractBuild.java:761)
    at hudson.model.Build$BuildExecution.build(Build.java:203)
    at hudson.model.Build$BuildExecution.doRun(Build.java:160)
    at hudson.model.AbstractBuild$AbstractBuildExecution.run(AbstractBuild.java:536)
    at hudson.model.Run.execute(Run.java:1741)
    at hudson.model.FreeStyleBuild.run(FreeStyleBuild.java:43)
    at hudson.model.ResourceController.execute(ResourceController.java:98)
    at hudson.model.Executor.run(Executor.java:374)
Caused by: com.heroku.api.exception.ParseException: Unable to parse data.
    at com.heroku.api.parser.JerseyClientJsonParser.parse(JerseyClientJsonParser.java:24)
    at com.heroku.api.parser.Json.parse(Json.java:70)
    ... 18 more
Caused by: org.codehaus.jackson.map.JsonMappingException: Can not deserialize instance of java.lang.String out of START_OBJECT token
 at [Source: [B@176e40b; line: 1, column: 473] (through reference chain: com.heroku.api.Release["pstable"])
    at org.codehaus.jackson.map.JsonMappingException.from(JsonMappingException.java:160)
    at org.codehaus.jackson.map.deser.StdDeserializationContext.mappingException(StdDeserializationContext.java:198)
    at org.codehaus.jackson.map.deser.StdDeserializer$StringDeserializer.deserialize(StdDeserializer.java:656)
    at org.codehaus.jackson.map.deser.StdDeserializer$StringDeserializer.deserialize(StdDeserializer.java:625)
    at org.codehaus.jackson.map.deser.MapDeserializer._readAndBind(MapDeserializer.java:235)
    at org.codehaus.jackson.map.deser.MapDeserializer.deserialize(MapDeserializer.java:165)
    at org.codehaus.jackson.map.deser.MapDeserializer.deserialize(MapDeserializer.java:25)
    at org.codehaus.jackson.map.deser.SettableBeanProperty.deserialize(SettableBeanProperty.java:230)
    at org.codehaus.jackson.map.deser.SettableBeanProperty$MethodProperty.deserializeAndSet(SettableBeanProperty.java:334)
    at org.codehaus.jackson.map.deser.BeanDeserializer.deserializeFromObject(BeanDeserializer.java:495)
    at org.codehaus.jackson.map.deser.BeanDeserializer.deserialize(BeanDeserializer.java:351)
    at org.codehaus.jackson.map.deser.CollectionDeserializer.deserialize(CollectionDeserializer.java:116)
    at org.codehaus.jackson.map.deser.CollectionDeserializer.deserialize(CollectionDeserializer.java:93)
    at org.codehaus.jackson.map.deser.CollectionDeserializer.deserialize(CollectionDeserializer.java:25)
    at org.codehaus.jackson.map.ObjectMapper._readMapAndClose(ObjectMapper.java:2131)
    at org.codehaus.jackson.map.ObjectMapper.readValue(ObjectMapper.java:1481)
    at com.heroku.api.parser.JerseyClientJsonParser.parse(JerseyClientJsonParser.java:22)
    ... 19 more
Build step 'Heroku: Set Configuration' marked build as failure

Effectively, it looks like Heroku has changed the structure of their pstable object, and that the baked-into-a-JAR definition of it (Map<String, String> in Java) will no longer work.

Open-Source to the rescue

Although the Java APIs for Heroku have been untouched since 2012, and indeed the Jenkins Plugin itself was announced deprecated (without a suggested replacement) only a week ago, fortunately the whole shebang is open-source on Github so I took it upon myself to download the code and fix this thing. A lot of swearing, further downloading of increasingly-obscure Heroku libraries and general hacking later, and not only is the bug fixed:

- Map<String, String> pstable;
+ Map<String, Object> pstable;

But there are new tests to prove it, and a new Heroku Jenkins Plugin available here now. Grab this binary, and go to Manage Jenkins -> Manage Plugins -> Advanced -> Upload Plugin and drop it in. Reboot Jenkins, and you're all set.

Friday, 26 February 2016

Making better software with Github

This solved my problem, in that I no longer ran out of PermGen on my build slave. But the repercussions were far-reaching. Any decent public-facing library needs documentation, and Github's README.md is an incredibly convenient place to put it all. I've lost count of the number of times I've found myself reading my own documentation up there on Github; if Arallon was still a hodge-podge of classes within my application, I'd have spent hours trying to deduce my own functionality ...

Of course, a decent open-source library must also have excellent tests and test coverage. Splitting Arallon into its own library gave the tests a new-found focus and similarly the test coverage (measured with JaCoCo) was much more significant.

Since that first library split, I've peeled off many other utility libraries from private projects; almost always things to make Play2 app development a little quicker and/or easier:

- play2-reactivemongo-mocks - Mocking out a ReactiveMongo persistence layer

- play2-mailgun - Easily send email via MailGun's API

- pac4j-underarmour - Integrates UnderArmour (aka MapMyRun) into the pac4j authentication framework

- mondrian - A super-simple CRUD layer for Play + ReactiveMongo

As a shameless plug, I use yet another of my own projects (I love my own dogfood!), sbt-skeleton to set up a brand new SBT project with tons of useful defaults like dependencies, repository locations, plugins etc as well as a skeleton directory structure. This helps make the decision to extract a library a no-brainer; I can have a library up-and-building, from scratch, in minutes. This includes having it build and publish to BinTray, which is simply just a matter of cloning an existing Jenkins job and changing the name of the source Github repo.

I've found the implied peer-pressure of having code "out there" for public scrutiny has a strong positive effect on my overall software quality. I'm sure I'm not the only one. I highly recommend going through the process of extracting something re-usable from private code and open-sourcing it into a library you are prepared to stand behind. It will make you a better software developer in many ways.

* This is not a criticism of OpenShift; I love them and would gladly pay them money if they would only take my puny Australian dollars :-(

Monday, 11 January 2016

*Facepalm* 2016

Setup for Failure

The key integration point between your existing user-access code and pac4j's form handling is your implementation of the UsernamePasswordAuthenticator interface which is where credentials coming from the input form get checked over and the go/no-go decision is made. Here's what it looks like:

public interface UsernamePasswordAuthenticator

extends Authenticator<UsernamePasswordCredentials> {

/**

* Validate the credentials.

* It should throw a CredentialsException in case of failure.

*

* @param credentials the given credentials.

*/

@Override

void validate(UsernamePasswordCredentials credentials);

}

An apparently super-simple interface, but slightly lacking in documentation, this little method cost me over a day of futzing around debugging, followed by a monstrous facepalm.

Side-effects for the lose

The reasons for this method being void are not apparent, but such things are not as generally frowned-upon in the Java world as they are in Scala-land. Here's what a basic working implementation (that just checks that the username is the same as the password) looks like as-is in Scala:

object MyUsernamePasswordAuthenticator

extends UsernamePasswordAuthenticator {

val badCredsException =

new BadCredentialsException("Incorrect username/password")

def validate(credentials: UsernamePasswordCredentials):Unit = {

if (credentials.getUsername == credentials.getPassword) {

credentials.setUserProfile(new EmailProfile(credentials.getUsername))

} else {

throw badCredsException

}

}

}

So straight away we see that on the happy path, there's an undocumented incredibly-important side-effect that is needed for the whole login flow to work - the Authenticator must mutate the incoming credentials, populating them with a profile that can then be used to load a full user object. Whoa. That's three pretty-big no-nos just in the description! The only way I found out about this mutation path was by studying some test/throwaway code that also ships with the project.

Not great. I think a better Scala implementation might look more like this:

object MyUsernamePasswordAuthenticator

extends ScalaUsernamePasswordAuthenticator[EmailProfile] {

val badCredsException =

new BadCredentialsException("Incorrect username/password")

/** Return a Success containing an instance of EmailProfile if

* successful, otherwise a Failure around an appropriate

* Exception if invalid credentials were provided

*/

def validate(credentials: UsernamePasswordCredentials):Try[EmailProfile] = {

if (credentials.getUsername == credentials.getPassword) {

Success(new EmailProfile(credentials.getUsername))

} else {

Failure(badCredsException)

}

}

}

We've added strong typing with a self-documenting return-type, and lost the object mutation side-effect. If I'd been coding to that interface, I wouldn't have needed to go spelunking through test code.
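To be clear, ScalaUsernamePasswordAuthenticator does not exist in pac4j; it is an interface I am imagining. A self-contained sketch of what it might look like follows, with simple stand-in case classes replacing the real pac4j credentials, profile and exception types so that it compiles on its own. Everything inside `AuthenticatorSketch` is hypothetical.

```scala
import scala.util.{Failure, Success, Try}

object AuthenticatorSketch {
  // Stand-ins for the pac4j types, just enough to make the sketch compile
  final case class Credentials(username: String, password: String)
  final case class EmailProfile(emailAddress: String)
  final class BadCredentialsException(msg: String) extends RuntimeException(msg)

  // The imagined interface: the profile is RETURNED rather than being
  // written into the incoming credentials, and failure is a value.
  trait ScalaUsernamePasswordAuthenticator[P] {
    def validate(credentials: Credentials): Try[P]
  }

  // Same toy rule as before: valid when username equals password
  object SameAsUsernameAuthenticator
      extends ScalaUsernamePasswordAuthenticator[EmailProfile] {
    def validate(credentials: Credentials): Try[EmailProfile] =
      if (credentials.username == credentials.password) {
        Success(EmailProfile(credentials.username))
      } else {
        Failure(new BadCredentialsException("Incorrect username/password"))
      }
  }
}
```

A caller can then pattern-match on Success/Failure (or use map/recover) instead of relying on a thrown exception and a mutated argument.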

But this wasn't my facepalm.

Race to the bottom

Of course my real Authenticator instance is going to need to hit the database to verify the credentials. As a longtime Play Reactive-Mongo fan, I have a nice little asynchronous service layer to do that. My UserService offers the following method:

class UserService extends MongoService[User]("users") {

...

def findByEmailAddress(emailAddress:String):Future[Option[User]] = {

...

}

I've left out quite a lot of details, but you can probably imagine that plenty of boilerplate can be stuffed into the strongly-typed MongoService superclass (as well as providing the basic CRUD operations) and subclasses can just add handy extra methods appropriate to their domain object.

The signature of the findByEmailAddress method encapsulates the fact that the query both a) takes time and b) might not find anything. So let's see how I employed it:

def validate(credentials: UsernamePasswordCredentials):Unit = {

userService.findByEmailAddress(credentials.getUsername).map { maybeUser =>

maybeUser.fold(throw badCredsException) { u =>

if (!User.isValidPassword(u, credentials.getPassword)) {

logger.warn(s"Password for ${u.displayName} did not match!")

throw badCredsException

} else {

logger.info(s"Credentials for ${u.displayName} OK!")

credentials.setUserProfile(new EmailProfile(u.emailAddress))

}

}

}

}

It all looks reasonable right? Failure to find the user means an instant fail; finding the user but not matching the (BCrypted) passwords also results in an exception being thrown. Otherwise, we perform the necessary mutation and get out.

So here's what happened at runtime:

- A valid username/password combo would appear to get accepted (log entries etc) but not actually be logged in

- Invalid combos would be logged as such but the browser would not redisplay the login form with errors

Have you spotted the problem yet?

The return type of findByEmailAddress is Future[Option[User]] - but I've completely forgotten the Future part (probably because most of the time I'm writing code in Play controllers where returning a Future is actually encouraged). Because the surrounding method returns Unit, Scala happily discards the Future that my map produces and won't flag anything. So my method ends up returning nothing almost-instantaneously, which makes pac4j think everything is good. Then it tries to use the UserProfile of the passed-in object to actually load the user in question, but of course the mutation code hasn't run yet so it's null - we're almost-certainly still waiting for the result to come back from Mongo!

**Facepalm**

An Await.ready() around the whole lot fixed this one for me. But I think I might need to offer a refactor to the pac4j team ;-)
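Here is a stripped-down, runnable sketch of the trap and of a blocking fix. The lookup `findPasswordFor` is a made-up stand-in for the real Mongo-backed service, and the plain-text password check is for illustration only. One detail worth knowing when applying this: Await.ready only waits for the Future to complete, whereas Await.result also rethrows an exception thrown inside it, so the sketch uses Await.result to make sure bad credentials still surface as an exception in the calling thread.

```scala
import scala.concurrent.{Await, Future}
import scala.concurrent.ExecutionContext.Implicits.global
import scala.concurrent.duration._

object BlockingValidateSketch {
  final class BadCredentialsException(msg: String) extends RuntimeException(msg)

  // Hypothetical asynchronous lookup standing in for the database call
  def findPasswordFor(username: String): Future[Option[String]] =
    Future(if (username == "alice") Some("secret") else None)

  // The bug: the Future is created and then discarded, so this returns
  // straight away and the exception thrown inside never reaches the caller.
  def validateBroken(username: String, password: String): Unit = {
    findPasswordFor(username).map { maybePassword =>
      if (!maybePassword.contains(password)) {
        throw new BadCredentialsException("Incorrect username/password")
      }
    }
    ()
  }

  // The fix: block until the check has finished. Await.result rethrows
  // whatever the Future failed with, so the caller sees the exception.
  def validateBlocking(username: String, password: String): Unit =
    Await.result(
      findPasswordFor(username).map { maybePassword =>
        if (!maybePassword.contains(password)) {
          throw new BadCredentialsException("Incorrect username/password")
        }
      },
      5.seconds
    )
}
```

Calling `validateBroken("alice", "wrong")` returns normally, which is exactly the false "everything is good" signal described above, while `validateBlocking("alice", "wrong")` throws.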