Saturday, 30 December 2023

2023 End-of-year wrapup

Sunday, 26 November 2023

Mermaid.js is incredibly cool

Monday, 23 October 2023

Markdown (and Mermaid) on Blogger in 2023

Saturday, 30 September 2023

Frenzy.js - the saga of flood-fill

It may surprise you to know that yes, I am back working on Frenzy.js after a multi-year hiatus. It's a bit surreal working on an "old-skool" (pre-hooks) React app but the end is actually in sight. As of September 2023 I have (by my reckoning) about 80% of the game done:

- Basic geometry (it's all scaled 2x)

- Levels with increasing numbers of Leptons with increasing speed

- Reliable collision detection

- High-score table that persists to a cookie

- Mostly-reliable calculation of the area to be filled

- Accurate emulation of the game state, particularly when the game "pauses"

The big-ticket items I still need to complete are:

- Implement "chasers" on higher levels

- Fine-tune the filled-area calculation (it still gets it wrong sometimes)

- Animated flood-fill

- Player-start, player-death and Lepton-death animations

- (unsure) Sound.

It all comes flooding back

To remind you (if you were an 80s Acorn kid) or (far more likely) educate you on what I mean by "flood-fill", here's Frenzy doing its thing in an emulator; I've just completed drawing the long vertical line, and now the game is flood-filling the smaller area:

I wanted to replicate this distinctive style of flood-fill exactly in my browser-based version, and it's been quite the labour of love. My first attempt (that actually worked; there were many iterations that did not) was so comically slow that I almost gave up on the whole idea. Then I took a concrete pill and decided that if I couldn't get a multiple-GHz multi-cored MONSTER of a machine to replicate a single-cored 2MHz (optimistically) 8-bit grot-box from the early 1980s, I may as well just give up...

The basic concept for this is:

Given a polygonal area A that needs to be flood-filled:

- Determine bottom-rightmost inner point P within A.

- The "frontier pixels" is now the array [P]

- On each game update "tick":

  - Expand each of the "frontier pixels" to the N, S, E and W; but

  - Discard an expansion if it hits a boundary of A

  - Also discard it if the pixel has already been filled

  - The new "frontier pixels" is all the undiscarded pixels

- Stop when the "frontier pixels" array is empty

I got this to be pretty efficient using a bit-field "sparse array" to quickly check for already-filled pixels. In the browser, I could perform the per-tick operations in less than 0.1 milliseconds for any size of A. Not too surprising given the entire game area is only 240x180 pixels, and the maximum possible polygonal area could only ever be half that big: 21,600 pixels.
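To make the per-tick expansion concrete, here's a minimal sketch of it. It is not the game's actual code: it uses a plain Uint8Array of flags instead of the bit-field sparse array, and the names startFill, tick and isBoundary are invented for illustration.

```typescript
type Point = [number, number];

interface FloodFill {
  width: number;
  height: number;
  filled: Uint8Array; // one flag per pixel; 1 = already filled
  frontier: Point[];
}

// Begin a fill at the (assumed already-chosen) bottom-rightmost inner point P.
function startFill(width: number, height: number, p: Point): FloodFill {
  const filled = new Uint8Array(width * height);
  filled[p[1] * width + p[0]] = 1;
  return { width, height, filled, frontier: [p] };
}

// One game "tick": expand every frontier pixel N, S, E and W.
// Returns true while there is still a frontier to expand.
function tick(
  fill: FloodFill,
  isBoundary: (x: number, y: number) => boolean,
): boolean {
  const next: Point[] = [];
  for (const [x, y] of fill.frontier) {
    const neighbours: Point[] = [[x, y - 1], [x, y + 1], [x + 1, y], [x - 1, y]];
    for (const [nx, ny] of neighbours) {
      // Stay inside the play area
      if (nx < 0 || ny < 0 || nx >= fill.width || ny >= fill.height) continue;
      // Discard an expansion that hits a boundary of A
      if (isBoundary(nx, ny)) continue;
      // Discard an expansion onto an already-filled pixel
      const idx = ny * fill.width + nx;
      if (fill.filled[idx] === 1) continue;
      fill.filled[idx] = 1;
      next.push([nx, ny]);
    }
  }
  fill.frontier = next;
  return next.length > 0;
}
```

Marking a pixel as filled at the moment it joins the new frontier means two frontier pixels can never both claim the same neighbour in a single tick.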

The problem now became efficiently shifting the big pile'o'filled-pixels from the algorithm onto the HTML5 canvas that is the main gameplay area. I'm using the excellent React Konva library as a nice abstraction over the canvas, but the principal problem is that a canvas doesn't expose per-pixel operations in its API, and nor does Konva. The Konva team has done an admirable job making their code as performant as possible, but my first cut (instantiating a pile of tiny 1x1 Rects on each tick) simply couldn't cope once the number of pixels got significant:

This has led me down a quite-interesting rabbit-hole at the intersection of HTML5 Canvas, React, React-Konva, and general "performance" stuff which is familiar-yet-different. There's an interesting benchmark set up for this, and the results are all over the shop depending on browser and platform. Mobile results are predictably terrible but I'm deliberately not targeting them. This was a game for a "desktop" (before we called them that) and it needs keyboard input. I contemplated some kind of gestural control but it's just not good enough I think, so I'd rather omit it.

What I need to do is find a way to automagically go from a big dumb pile of individual filled pixels to a suitable collection of optimally-shaped polygons, implemented as Konva Lines.

The baseline

In code, what I first naïvely had was:

type Point = [number, number]

// get the latest flood fill result as array of points

const filledPixels:Array<Point> = toPointArray(sparseMap);

// Simplified a little - we use some extra options

// on Rect for performance...

return filledPixels.map((fp) =>

<Rect x={fp[0]}

y={fp[1]}

width={1}

height={1}

lineCap="square"

fill="red"

/>

);

With the above code, the worst-case render time while filling the worst-case shape (a box 120x180px) was 123ms. Unacceptable.

What I want is:

// Konva just wants a flat list of x1,y1,x2,y2,x3,y3

type Poly = Array<number>;

// get the latest flood fill result as array of polys

const polys:Array<Poly> = toOptimalPolyArray(sparseMap);

// far-fewer, much-larger polygons

return polys.map((poly) =>

<Line points={poly}

lineCap="square"

fill="red"

closed

/>

);

So how the hell do I write toOptimalPolyArray()?

Optimisation step 1: RLE FTW

My Googling for "pixel-to-polygon" and "pixel vectorisation" failed me, so I just went from first principles and tried a Run-Length-Encoding on each line of the area to be filled. As a first cut, this should dramatically reduce the number of Konva objects required. Here's the worst-case render time while filling the worst-case shape (a box 120x180px): 4.4ms
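Here's a rough sketch of what a per-row run-length encoding could look like. The Run type and the toRuns name are mine, for illustration only; this isn't the exact code from the game.

```typescript
type Point = [number, number];

// A horizontal run of filled pixels on row y, from xStart to xEnd inclusive.
type Run = { y: number; xStart: number; xEnd: number };

function toRuns(pixels: Point[]): Run[] {
  // Sort by row, then by column, so neighbouring pixels sit next to each other.
  const sorted = [...pixels].sort((a, b) => a[1] - b[1] || a[0] - b[0]);
  const runs: Run[] = [];
  for (const [x, y] of sorted) {
    const last = runs[runs.length - 1];
    if (last !== undefined && last.y === y && x <= last.xEnd + 1) {
      // Same row and touching (or duplicating) the current run: extend it
      last.xEnd = Math.max(last.xEnd, x);
    } else {
      // New row, or a gap in this row: start a new run
      runs.push({ y, xStart: x, xEnd: x });
    }
  }
  return runs;
}
```

For the worst-case 120x180 box this turns 21,600 single-pixel shapes into 180 runs, one per row.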

Optimisation step 2: Boxy, but good

I'd consider this to be a kind of half-vectorisation. Each row of the area is optimally vectorised into a line with a start and end point. The next step would be to iterate over the lines, and simply merge lines that are "stacked" directly on top of each other. Given the nature of the shapes being filled is typically highly rectilinear, this felt like it would "win" quite often. Worst-case render time now became: 1.9ms
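A sketch of the "stacking" merge, under the assumption that the runs on any one row never overlap. Box, mergeStackedRuns and boxToPoly are illustrative names, not the game's real ones.

```typescript
type Run = { y: number; xStart: number; xEnd: number };

// A rectangle of filled pixels; all four bounds are inclusive.
type Box = { xStart: number; xEnd: number; yStart: number; yEnd: number };

function mergeStackedRuns(runs: Run[]): Box[] {
  const sorted = [...runs].sort((a, b) => a.y - b.y || a.xStart - b.xStart);
  const boxes: Box[] = [];
  // The most recent box seen for each horizontal span, keyed by "xStart:xEnd"
  const latest = new Map<string, Box>();
  for (const run of sorted) {
    const key = `${run.xStart}:${run.xEnd}`;
    const above = latest.get(key);
    if (above !== undefined && above.yEnd === run.y - 1) {
      // Identical span directly above: grow that box down by one row
      above.yEnd = run.y;
    } else {
      const box: Box = { xStart: run.xStart, xEnd: run.xEnd, yStart: run.y, yEnd: run.y };
      boxes.push(box);
      latest.set(key, box);
    }
  }
  return boxes;
}

// Flat x1,y1,x2,y2,... corner list; +1 turns inclusive pixel bounds into outer edges.
function boxToPoly(box: Box): number[] {
  const right = box.xEnd + 1;
  const bottom = box.yEnd + 1;
  return [box.xStart, box.yStart, right, box.yStart, right, bottom, box.xStart, bottom];
}
```

Only runs with exactly the same start and end are merged, so the output is always plain rectangles; anything ragged stays as individual one-row boxes.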

Optimisation step 3: Know your enemy

I felt there was still one more optimisation possible, and that is to exploit the fact that the game always picks the bottom-right-hand corner in which to start filling. Thus there is a very heavy bias towards the fill at any instant looking something like this:

---------------- | | | | | P| | LL| | LLL| | LLLL| | LLLLL| | LLLLLL| | LLLLLLL| | LLLLLLLL| | LLLLLLLLL| | LLLLLLLLLL| | LLLLLLLLLLL| | LLLLLLLLLLLL| | LLLLLLLLLLLLL| |SSSSSSSSSSSSSS| |SSSSSSSSSSSSSS| |SSSSSSSSSSSSSS| ----------------where

- P is an unoptimised pixel

- L is a part line, that can be fairly efficiently represented by my "half-vectorisation", and

- S is an optimal block from the "stacked vectorisation" approach

Sunday, 27 August 2023

Searching for the next SPA

I've been quite taken with a particular style of casual, "clever" game that rose to prominence during The COVID Years but still has a charm that keeps me visiting almost daily:

- Wordle (the original, and the "rags to riches" ideal)

- Quordle (a beautiful initial implementation, albeit reduced now)

- Heardle (recently escaped from the clutches of Spotify)

- Connections at the New York Times

There are a heap of common factors amongst these games (and I'll optimistically include my own Cardle here too) that I think make them feel so "nice":

- Rejection of obvious monetization strategies

- Feel resolutely mobile-first in UI/UX (large elements, zero scrolling!)

- Delightful levels of polish (micro-interactions, animation etc)

- Focused; not just a Single *Page* App, but almost a Single *Pane* App

Of course there's also the little matter of having a great idea with suitably nice mechanics and, frequently, creativity (Connections I think excels in having creative, challenging content by Wyna Liu that is pitched just *chef's kiss*) but I think there are still areas of the word-association, letter-oriented game landscape to be explored.

For this next one I will also be trying out Svelte after reading the superb "Things you forgot (or never knew) because of React" by Josh Collinsworth, which very nicely articulated what feels a little "ick" about React development these days, and paints a very nice picture of what's on the other side of the fence. The scoped-styling and in-built animation abilities in particular seem like a perfect fit for this kind of app.

Now I just need an idea...

Sunday, 30 July 2023

Can you handle the truth?

JavaScript/ECMAScript/TypeScript are officially everywhere these days and with them comes the idiomatic use of truthiness checking.

At work, recently I had to fix a nasty bug where the truthiness of an optional value was used to determine what "mode" to be in, instead of a perfectly-good enumerated type located nearby. Let me extrapolate this into a worked example that might show how dangerous this is:

type VehicleParameters = {

roadSpeed: number;

engineRPM: number;

...

cruiseControlOn: boolean;

cruiseControlSpeed: number | undefined;

}

and imagine, running a few times a second, we had a function:

function maintainCruiseSpeed(vp: VehicleParameters) {

const { roadSpeed, cruiseControlSpeed } = vp;

if (cruiseControlSpeed && roadSpeed < cruiseControlSpeed) {

accelerate();

}

}

Let's suppose the driver of this vehicle hits "SET" on their cruise control stalk to lock in their current speed of 100km/h as their desired automatically-maintained speed. The control module sets the cruiseControlOn boolean to true, and copies the current value of roadSpeed (being 100) into cruiseControlSpeed.

Now imagine the driver disengages cruise control, and the boolean is correctly set to false, but the cruiseControlSpeed is retained, as it is very common for a cruise system to have a RESUME feature that goes back to the previously-stored speed.

And all of a sudden we have an Unintended Acceleration situation. Yikes.

As simple as can be, but no simpler

Don't get me wrong, I like terse code; one of the reasons I liked Scala so much was the succinctness after escaping from the famously long-winded Kingdom of Nouns. I also loathe redundant and/or underperforming fields, in particular Booleans that shadow another bit of state, e.g.:

const [isLoggedIn] = useState(false);

const [loggedInUser] = useState(undefined);

That kind of stuff drives me insane. What I definitely really like is when we can be JavaScript-idiomatic AND use the power of TypeScript to prevent combinations of things that should not be. How?

TypeScript Unions have entered the chat

Let's define some types that model the behaviour we want:

- When cruise is turned on we need a target speed, and there's no resume speed

- When cruise is turned off we zero both the target speed and the resume speed

- When cruise is set to coast (or the brake pedal is pressed) we zero the target speed, but store a resume speed

- When cruise is resumed we need a target speed to get back to, and there's no resume speed

type VehicleParameters = {

roadSpeed: number;

engineRPM: number;

cruiseControlSettings: CruiseControlSettings;

}

type CruiseControlSettings =

CruiseOnSettings |

CruiseOffSettings |

CruiseCoastSettings |

CruiseResumeSettings

type CruiseOnSettings = {

mode: CruiseMode.CruiseOn

targetSpeedKmh: number;

resumeSpeedKmh: 0;

}

type CruiseOffSettings = {

mode: CruiseMode.CruiseOff

targetSpeedKmh: 0;

resumeSpeedKmh: 0;

}

type CruiseCoastSettings = {

mode: CruiseMode.CruiseCoast

targetSpeedKmh: 0;

resumeSpeedKmh: number;

}

type CruiseResumeSettings = {

mode: CruiseMode.CruiseResume

targetSpeedKmh: number;

resumeSpeedKmh: 0;

}

Let's also write a new version of maintainCruiseSpeed, still in idiomatic ECMAScript (i.e. using truthiness):

function maintainCruiseSpeed(vp: VehicleParameters) {

const { roadSpeed, cruiseControlSettings } = vp;

if (cruiseControlSettings.targetSpeedKmh && roadSpeed < cruiseControlSettings.targetSpeedKmh) {

accelerate();

}

}

And finally, let's try and update the cruise settings to an illegal combination:

function illegallyUpdateCruiseSettings():CruiseControlSettings {

return {

mode: CruiseMode.CruiseOff,

targetSpeedKmh: 120,

resumeSpeedKmh: 99,

}

}

... but notice now, you can't; you get a TypeScript error:

Type

'{ mode: CruiseMode.CruiseOff; targetSpeedKmh: 120;

resumeSpeedKmh: number; }'

is not assignable to type 'CruiseControlSettings'.

Types of property 'targetSpeedKmh' are incompatible.

Type '120' is not assignable to type '0'

I'm not suggesting that TypeScript types will unequivocally save your critical code from endangering human life, but a little thought expended on sensibly modelling conditions just might help.
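If you'd rather not lean on truthiness at all, the same union also lets the compiler check that every mode has been considered. The sketch below assumes CruiseMode is a plain numeric enum (its definition isn't shown above) and introduces a hypothetical shouldAccelerate helper:

```typescript
// Assumed definition; the post uses CruiseMode but never shows it.
enum CruiseMode { CruiseOn, CruiseOff, CruiseCoast, CruiseResume }

type CruiseOnSettings = { mode: CruiseMode.CruiseOn; targetSpeedKmh: number; resumeSpeedKmh: 0 };
type CruiseOffSettings = { mode: CruiseMode.CruiseOff; targetSpeedKmh: 0; resumeSpeedKmh: 0 };
type CruiseCoastSettings = { mode: CruiseMode.CruiseCoast; targetSpeedKmh: 0; resumeSpeedKmh: number };
type CruiseResumeSettings = { mode: CruiseMode.CruiseResume; targetSpeedKmh: number; resumeSpeedKmh: 0 };

type CruiseControlSettings =
  | CruiseOnSettings
  | CruiseOffSettings
  | CruiseCoastSettings
  | CruiseResumeSettings;

// Hypothetical helper: decide whether to accelerate by switching on the mode.
function shouldAccelerate(roadSpeed: number, settings: CruiseControlSettings): boolean {
  switch (settings.mode) {
    case CruiseMode.CruiseOn:
    case CruiseMode.CruiseResume:
      // Only these two modes carry a meaningful target speed
      return roadSpeed < settings.targetSpeedKmh;
    case CruiseMode.CruiseOff:
    case CruiseMode.CruiseCoast:
      return false;
    default: {
      // If a new mode is added to the union and not handled above,
      // this assignment stops compiling.
      const unhandled: never = settings;
      return unhandled;
    }
  }
}
```

The never assignment in the default branch is the useful part: forget a mode and the build breaks, rather than the car.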

Sunday, 25 June 2023

In praise of ETL, part three: Love-you-Load-time

Finishing off my three-part series as a newly-minted ETL fanboi, we get to the Load stage. One could be forgiven for thinking there is not a great deal to get excited about at this point; throw data at the new system and it's Job Done. But as usual with ETL, there are hidden pleasures lurking that you might not have considered until you've got your feet wet.

Mechanical Sympathy

In the "customer migration" ETL project I worked on, the output of the Transform stage for a given customer was dumped into a JSON file in an AWS S3 bucket. At Load time, the content of a bucket was scooped out and fed into the target system. Something we noticed quite quickly as the migration project ramped up, was that the new system did not "like" being hit with too many "create new customer" API calls per second. It was pretty simple to implement a rate limit system in the Load stage (only!) to ensure we were being mechanically-sympathetic to the new system, while still being able to go as fast as possible in the other stages.
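I can't share the real thing, but a Load-side rate limit can be as small as the sketch below. RateLimiter, reserve and loadAll are made-up names, and the send callback stands in for whatever "create new customer" call the target system exposes.

```typescript
// Hands out evenly-spaced time slots, at most maxPerSecond of them per second.
class RateLimiter {
  private readonly gapMs: number;
  private nextFreeMs = 0;

  constructor(maxPerSecond: number) {
    this.gapMs = 1000 / maxPerSecond;
  }

  // Books the next slot and returns how long (ms) the caller must wait for it.
  reserve(nowMs: number): number {
    const slot = Math.max(nowMs, this.nextFreeMs);
    this.nextFreeMs = slot + this.gapMs;
    return slot - nowMs;
  }
}

// Feed items to the target system one at a time, never faster than the limiter allows.
async function loadAll<T>(
  items: T[],
  send: (item: T) => Promise<void>,
  limiter: RateLimiter,
): Promise<void> {
  for (const item of items) {
    const waitMs = limiter.reserve(Date.now());
    if (waitMs > 0) {
      await new Promise<void>((resolve) => setTimeout(resolve, waitMs));
    }
    await send(item);
  }
}
```

Taking the current time as an argument to reserve keeps the slot arithmetic easy to unit-test without any real waiting.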

Optimal Throughput

Indeed, we had a similar rate-limit in our Extract for the benefit of our source system(s) - albeit at a higher rate as its API seemed to be able to handle reading a fair bit faster than the new system's API could write. And there's another benefit - we weren't being throttled by the speed of the slower system; we could still extract as fast as the source would allow, transform and buffer into S3, then load at the optimal speed for the new system. You could get fancy and call it Elastic Scaling or somesuch, but really, if we'd used some monolithic process to try and do these customer migrations, we wouldn't have had the fine-grained control.

Idempotency is Imperative

One last tip; strive to ensure your Load stage does not alter the output of the Transform in any way, or you'll lose one of the key advantages of the whole ETL architecture. If you can't look at a transform file (e.g. a JSON blob in an S3 bucket in our case) and know that it's exactly what was sent to the target system, then your debugging just got a whole lot harder. Even something as innocent as populating a createdAt field with new Date() could well bite you (for example if the date has to be in a particular format). If you've got to do something like that, consider passing the date in, in the correct format, as an additional parameter to the Load stage, so there's at least some evidence of what the field was actually set to. There's really nothing worse than not being able to say with confidence what you actually sent to the target system.

We didn't do this, but if there was a "next time" I'd also store a copy of this payload in an S3 bucket as well, just for quick verification purposes.
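To illustrate the createdAt suggestion, here's a tiny sketch where the Load stage builds its request body purely from the stored transform output plus explicitly passed-in parameters. LoadParams and buildRequestBody are hypothetical names, not something from our project.

```typescript
// Everything the Load stage is allowed to add, passed in from outside
// so that it can be logged or stored alongside the transform file.
type LoadParams = { createdAt: string };

// Pure function: same transform file + same params = same request body.
function buildRequestBody(transformJson: string, params: LoadParams): string {
  const customer = JSON.parse(transformJson) as Record<string, unknown>;
  // No new Date() in here; the already-formatted value comes from the caller.
  return JSON.stringify({ ...customer, createdAt: params.createdAt });
}
```

Because nothing in the function reads a clock or any other ambient state, re-running it against the stored file and recorded parameters reproduces exactly what was sent.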

Saturday, 6 May 2023

In praise of ETL, part two; Trouble-free Transforms

Continuing with my series about the unexpected pleasures of ETL, we get to the Transform stage.

I've already mentioned in the previous post the huge benefit of separating the extraction and transformation stages, giving an almost-limitless source of test fixtures for transform unit tests. On the subject of testing, it strikes me that there's a parallel in ETL with the classic "cost of failure" model that is typically used to justify adoption of unit tests to dinosaur-era organisations that don't use them; viz:

(Graph from DeepSource.com)

My contention is that failure in each E/T/L stage has a similar cost profile (of course YMMV, but it applied in our customer-migration scenario):

An error during Extract

- (Assuming an absolute minimum of logic exists in the extraction code)

- Most likely due to a source system being overloaded/overwhelmed by sheer number of extraction requests occurring at once

- Throttle them, and retry the process

- Easy and cheap to restart

- Overall INEXPENSIVE

An error during Transform

- Easy to reproduce via unit tests/fixtures

- Rebuild, redeploy, re-run

- Overall MEDIUM EXPENSE

An error during Load

- Most likely in "somebody else's code"

- Investigation/rectification may require cross-functional/cross-team/cross-company communications

- Re-run for this scenario may be blocked if target system needs to be cleared down

- Overall HIGH EXPENSE

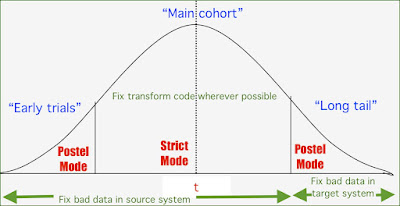

Thus it behooves us (such a great vintage phrase that!) to get our transforms nice and tight; heavy unit-testing is an obvious solution here but also careful consideration of what the approach to transforming questionable data should be. In our case, our initial transform attempts took the pragmatic, Postel-esque "accept garbage, don't throw an error, return something sensible" approach. So upon encountering invalid data for example, we'd log a warning, and transform it to an undefined object or empty array as appropriate.

This turned out to be a problem, as we weren't getting enough feedback about the sheer amount of bad input data we were simply skimming over, resulting in gaps in the data being loaded into the new system.

So in the next phase of development, we became willingly, brutally "fragile", throwing an error as soon as we encountered input data that wasn't ideal. This would obviously result in a lot of failed ETL jobs, but it alerted us to the problems which we could then mitigate in the source system or with code fixes (and unit tests) as needed.

Interestingly, it turned out that in the "long tail" of the customer migration project, we had to return (somewhat) to the "permissive mode" in order to get particularly difficult customer accounts to be migrated. The approach at that point was to migrate them with known holes in their data, and fix them in the TARGET system.
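In code terms, the difference between the two modes can be as small as one branch. This is a simplified sketch with invented names (SourceCustomer, transformEmails) rather than anything lifted from the real migration:

```typescript
type TransformMode = "strict" | "permissive";

// Hypothetical slice of an extracted customer record
type SourceCustomer = { id: string; emails?: unknown };

function transformEmails(
  source: SourceCustomer,
  mode: TransformMode,
  warnings: string[],
): string[] {
  const { emails } = source;
  if (Array.isArray(emails) && emails.every((e) => typeof e === "string")) {
    return emails as string[];
  }
  const problem = `customer ${source.id}: invalid emails field`;
  if (mode === "strict") {
    // "Fragile" mode: fail the whole job so the bad data gets noticed
    throw new Error(problem);
  }
  // "Permissive" mode: record a warning and carry on with a sensible default
  warnings.push(problem);
  return [];
}
```

Collecting warnings into an array that travels with the job, rather than only writing them to a log, makes the "known holes" easier to list when it's time to fix them in the target system.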

Here's my crude visualisation of it. I don't know if this mode of code evolution has a name but I found it interesting.

Sunday, 16 April 2023

Micro-Optimisation #393: More Log Macros!

I've posted some of my VSCode Log Macros previously, but wherever there is repetitive typing, there are further efficiencies to be gleaned!

Log, Label and Prettify a variable - [ Ctrl + Option + Command + J ]

You know what's better than having the contents of your console.log() autogenerated?

Having the whole thing inserted for you!

How do I add this?

On the Mac you can use ⌘-K-S to see the pretty shortcut list, then hit the "Open Keyboard Shortcuts (JSON)" icon in the top-right to get the text editor to show the contents of keybindings.json. And by the way, execute the command Developer: Toggle Keyboard Shortcuts Troubleshooting to get diagnostic output on what various special keystrokes map to in VSCode-speak (e.g. on a Mac, what Ctrl, Option and Command actually do).

keybindings.json

// Place your key bindings in this file to override the defaults

[

{

"key": "ctrl+meta+alt+j",

"when": "editorTextFocus",

"command": "runCommands",

"args": {

"commands": [

{

"command": "editor.action.copyLinesDownAction"

},

{

"command": "editor.action.insertSnippet",

"args": {

"snippet": "\nconsole.log(`${TM_SELECTED_TEXT}: ${JSON.stringify(${TM_SELECTED_TEXT}$1, null, 2)}`);\n"

}

},

{

"command": "cursorUp"

},

{

"command": "editor.action.deleteLines"

},

{

"command": "cursorDown"

},

{

"command": "editor.action.deleteLines"

},

],

}

}

]

This one uses the new (for April 2023, VSCode v1.77.3) runCommands command, which, as you might infer, allows commands to be chained together in a keybinding. A really nice property of this is that you can Command-Z your way back out of the individual commands; very helpful for debugging the keybinding, but also potentially just nice-to-have.

The trick here is to retain the text selection so that ${TM_SELECTED_TEXT} can continue to contain the right thing, without clobbering whatever might be in the editor clipboard at this moment. We do this by copying the line down. This helpfully keeps the selection right on the variable where we want it. We then blast over the top of the selection with the logging line, but by sneakily inserting \n symbols at each end, we break up the old line into 3 lines, where the middle one is the only one we want to keep. So we delete the above and below.

Saturday, 25 March 2023

In praise of ETL, part one; E's are good

I've written previously about how at work I've been using an ETL (Extract, Transform, Load) process for customer migrations, but that was mostly in the context of a use case for AWS Step Functions.

Now I want to talk about ETL itself, and how good it's been as an approach. It's been around for a while so one would expect it to have merits, but I've found some aspects to be particularly neat and wanted to call them out specifically. So here we go.

An Extract is a perfect test fixture

I'd never realised this before, but the very act of storing the data you plan on Transforming, and Loading, is tremendously powerful. Firstly, it lets you see exactly what data your Transform was acting upon; secondly, it gives you replay-ability using that exact data (if that's what you want/need) and thirdly, you've got an instant source of test fixture data for checking how your transform code handles that one weird bug that you just came across in production.

My workflow for fixing transform-stage bugs literally became:

- Locate JSON extract file for the process that failed

- Save as local JSON file in test fixtures directory of the transform code

- Write a test to attempt to transform this fixture (or sub-component of it)

- Test should fail as the production code does

- Fix transform code, test should now pass

- Commit fixed code, new test(s) and fixture

- Release to production

- Re-run ETL process; bug is gone
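As a sketch of the "write a test around the fixture" steps, assume a transform that used to blow up on a null phone number. The extract shape, the transformCustomer function and the inline fixture are all invented for illustration; in practice the JSON would be read from the fixtures directory.

```typescript
// Hypothetical extract, as if copied from the JSON file of the failed job
const nullPhoneFixture = `{"customerId": "c-123", "name": " Example Customer ", "phone": null}`;

type CustomerExtract = { customerId: string; name: string; phone: string | null };
type TargetCustomer = { id: string; displayName: string; phone?: string };

function transformCustomer(extract: CustomerExtract): TargetCustomer {
  const target: TargetCustomer = {
    id: extract.customerId,
    displayName: extract.name.trim(),
  };
  // The fix: only normalise the phone number when there actually is one
  if (extract.phone !== null) {
    target.phone = extract.phone.replace(/\s+/g, "");
  }
  return target;
}

// Regression test: returns the transformed result, or throws if the bug is back
function testNullPhoneFixture(): TargetCustomer {
  const extract = JSON.parse(nullPhoneFixture) as CustomerExtract;
  const result = transformCustomer(extract);
  if (result.phone !== undefined) {
    throw new Error("a null phone should be omitted from the target");
  }
  return result;
}
```

The test and the fixture get committed together with the fix, so that one weird production record keeps guarding the transform code from then on.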

Monday, 27 February 2023

Stepping up, and back, with the new Next.js "app" directory

I'm toying around with a new web-based side project and I thought it was time to give the latest Next.js version a spin. Although I've used Create-React-App (generally hosted on Netlify) more recently, I've dabbled with Next.js in one capacity or another since 2018, and this time some server-side requirements made it a better choice.

The killer feature of the 2023 "beta version" of Next.js (which I assume will eventually be named Next.js 14) is the app directory, which takes Next's already-excellent filesystem-based routing (i.e. if you create a file called bar.tsx in a directory called foo, you'll find it served up at /foo/bar without writing a line of code) and amps it up. A lot.

I won't try and reiterate their excellent documentation, but their nested layouts feature is looking like an absolute winner from where I'm sitting, and I'd like to explain why by taking you back in time. I've done this before when talking about React-related stuff when I joked that the HTML <img> tag was like a proto-React component. And I still stand by that comparison; I think this instant familiarity is one of the fundamental reasons why React has "won" the webapp developer mindshare battle.

Let me take you back to 1998. The Web is pretty raw, pretty wild, and mostly static pages. My Dad's website is absolutely no exception. I've meticulously hand-coded it in vi as a series of stand-alone HTML pages which get FTP'ed into position on his ISP's web server. Although I'm dimly aware of CSS, it's mainly still used for small hacks like removing the underlines from links (I still remember being shown the way to do this with an inline style tag and thinking it would never take off) - and I'm certainly not writing a separate .css file to be included by every HTML file. As a result, everything is styled "inline" so-to-speak, but not even in the CSS way; just mountains of widths and heights and font faces all over the place. It sucked, but HTML was getting better all the time so we just put up with it, used all that it offered, and automated what we could. Which was exactly what I did. If you dare to inspect the source of the above Wayback Machine page, you'll see that it uses HTML frames (ugh), which was a primitive way of maintaining a certain amount of UI consistency while navigating around the site.

The other thing I did to improve UI consistency, was a primitive form of templating. Probably more akin to concatenation, but I definitely had a header.htm which was crammed together with (for-example) order-body.htm to end up with order.htm using a DOS batch file that I ran to "pre-process" everything prior to doing an FTP upload - a monthly occurrence as my Dad liked to keep his "new arrivals" page genuinely fresh. Now header.htm definitely wasn't valid HTML as it would have had unclosed tags galore, but it was re-used for several pages that needed to look the same, and that made me feel efficient.

And this brings me to Next.js and the nested layouts functionality I mentioned before. To achieve what took me a pile of HTML frames, some malformed HTML documents and a hacky batch file, all I have to do is add a layout.tsx and put all the pages that should use that UI alongside it. I can add a layout.tsx in any subdirectory and it will apply from there "down". Consistency via convention over configuration, while still nodding to the hierarchical filesystem structures we've been using since Before The Web. It's just really well thought-out, and a telling example of how much thought is going into Next.js right now. I am on board, and will be digging deeper this year for sure.